Defensive play is a very interesting topic in hockey. Many people have their own opinions on what good defensive play is and how it should be assessed. There’s a huge variety of stats and tools both fans and teams use.

This article covers several methods for evaluating skater defence, ranging from analytics to the eye test, and looks at how effective each one is.

Box Score Stats (giveaways, blocked shots, etc)

Giveaways/Takeaways

Giveaways and takeaways are weird stats. Conceptually they make sense, but there are multiple problems with them.

They tend to penalize players who play a lot of minutes and who have the puck a lot

Stats like giveaways/takeaways don’t display results (I will go more into this later in the article)

There isn’t always a lot of correlation between giveaways/takeaways and preventing goals and chances. Connor McDavid leads the league in takeaways over the past 3 years, but he’s still on the ice for a high quantity of scoring chances and goals against. You also usually see a lot of defencemen who play heavy minutes near the top of giveaway totals (e.g. Petry, Ekblad, Brodie, etc.). While many good defensive players do have good giveaway/takeaway totals, excelling in this stat doesn’t necessarily mean a player excels in their own end.

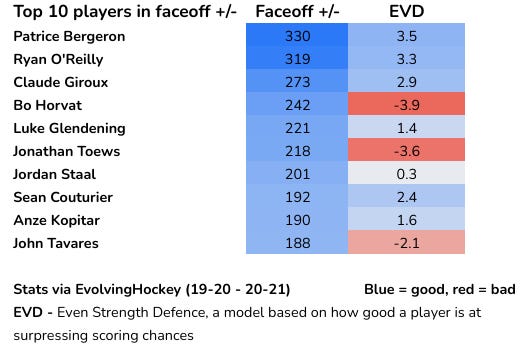

Faceoffs

As with giveaways and takeaways, the problem with faceoffs is that plenty of players with good faceoff stats are still poor defensive players with bad possession numbers. Many great defensive players have good faceoff numbers (e.g. Bergeron and O’Reilly), but a good faceoff percentage doesn’t necessarily indicate that a player is good defensively.

Faceoffs do matter a lot in certain situations, such as 3v3 OT or the start of a power play or penalty kill. Winning them is a good and useful skill for a player to have, but it is a separate skill from defending.

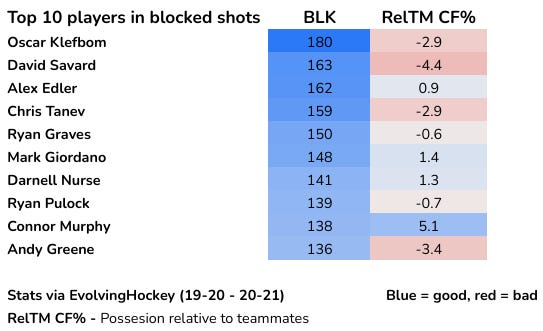

Blocked Shots

Players with a lot of blocked shots are usually stuck in their own zone a lot, which is the reason why they have so many blocked shots.

You can argue blocked shots show numerous things, like a player’s bravery and willingness to get in front of the puck. One thing they do not show is defensive ability. Blocked shots do technically prevent a shot from reaching the goalie, and players with lots of blocked shots can still have good high-quality chance suppression stats, like David Savard. Savard is one of the best players in the league at preventing QA (quality against).

But is it worth it if the team has to spend most of its time in the defensive zone when that player is on the ice?

Plus/Minus

To make it short and simple, plus/minus is a very unreliable statistic. It includes empty net and shorthanded goals (which can penalize star players), and it can be influenced by terrible goaltending, teammates, competition, zone starts, etc. It shouldn’t be used to evaluate players at all.

Microstats

Microstats are basically advanced stats for individual plays. You could even call them a different version of the eye test. They include things such as zone entries and exits, zone entry denials, dump-ins, forechecking pressures, etc. Most microstats aren’t publicly available; private companies (e.g. SportLogIQ) track their own, and NHL teams end up using them. Corey Sznajder does a great job of manually tracking some of them and making them available to the public, and I’d recommend subscribing to him.

The analytics community uses on-ice stats much more, but you’ll still see microstats being used a fair bit.

I think microstats are useful, but they shouldn’t be used as the only way to evaluate defence. Microstats show the areas in which a player excels, but they don’t show whether the player ultimately prevented scoring chances and goals. In my opinion, they shouldn’t be used to display results, since that isn’t what they measure.

A good example of what I mean is how some Leafs fans were using certain microstats to say that Auston Matthews should be a Selke candidate.

Those microstats are very useful, but Matthews’ on-ice impact on denying scoring chances and goals hasn’t been Selke level. His defence has been good, as his impact has been above average, but not Selke worthy. It’s a great thing to have lots of puck battle wins, stick checks, etc. They’re part of good defensive play, but they aren’t all of it, and they don’t show whether Matthews ultimately helped the Leafs prevent scoring chances. The Leafs allow scoring chances at a higher rate with Matthews on the ice and at a lower rate with him off of it.

Again, microstats are a useful tool, but they shouldn’t be used as a replacement/substitute for actual defensive results.

Eye Test

In my opinion, there are two types of the “eye test,” or in other words, watching the games. One is actual video analysis, where people go back, re-watch multiple games, and look at all aspects of a player’s performance. I’m in support of this kind of eye test, and I think it’s very useful.

Then there's the eye test that fans use as an argument when stats are against them, so they may respond with “watch the games, stats aren’t everything.” That eye test always seems like it’s based more on reputation, point totals, and personal bias.

I have multiple problems with this type of eye test. To start off, it’s too subjective, and we can have a lot of biases towards certain events/players. At the end of the game, will you remember the little plays, like winning puck battles, small breakout passes, coverage/positioning, etc? Or will you remember the big, flashy plays like a player splitting the D, getting a scoring chance, making a smooth cross-ice pass, etc? For almost all of us, the latter will be more memorable. Flashy, offensive plays are much more exciting and noticeable, while the smaller plays sometimes aren’t.

This video is an example of what I mean by “little plays.” Ethan Bear’s small, but very important defensive play, and then his smart, nifty pass led to the goal. He didn’t get a point on it, but without that play that led to the breakout in the first place, the Oilers don’t score (this is also why I think points are flawed, goals for and against can be impacted in more ways than just getting the last touch on it). This one is a bit more noticeable since it directly led to a goal, but plays like this can lead to scoring chances, possession, etc, all the time. We don’t pay as much attention to them as we would with goals or flashy offensive plays.

Even if you somehow could notice each play, could you remember it all for each and every player at the end of a season? Hockey is a complex game; it can be difficult to process the plays and performance of all 10 skaters on the ice at a time, and then remember how every player played at the end of the year using only our eyes. This is especially true when it comes to defence, and most people end up judging a player’s defensive play on reputation, time on ice, or box score stats.

My point isn’t that the eye test is useless. It’s literally just watching the game, and there’s a lot of value in that. You can get a general idea of a player in some aspects, like some offensive skills (e.g. passing, puck-moving, shooting, etc), but there are still lots of details we miss out on, especially in the case of a player’s play without the puck and overall defensive play.

To add on, good defence isn’t only things like poke checks and puck battle wins; it’s also preventing shots and chances from happening in the first place. It’s hard to quantify shot prevention using our eyes, because we would be counting events that never happened.

If you focus on just one player, you can get a better idea of that player, and the little plays become more noticeable. This type of video analysis is very useful, and we can see more clearly exactly how a player is playing and how they’re achieving their statistical results.

But trying to process and accurately evaluate each and every player’s performance is difficult. For the average fan, it’s basically impossible to reach an accurate conclusion on a player over an entire season using our eyes alone, especially as most of us only watch hockey as entertainment.

Macrostats/On-Ice Stats

Macrostats, or in other terms, on-ice stats, are the best way to evaluate skater defence in my opinion. On-ice stats are the events that happen when a certain player is on the ice, such as goals and shots. They’re used to see what kind of impact a player has on their team in terms of generating/preventing goals and scoring chances. Analytic models, such as EvolvingHockey and HockeyViz, run a ridge regression to adjust each player’s on-ice stats for factors such as quality of teammates, quality of competition, score and venue, etc (HockeyViz goes further and also adjusts for coaching). This helps us find each player’s isolated impact on their team’s performance offensively and defensively.
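
To make the ridge regression idea a bit more concrete, here is a minimal sketch on made-up data. It is not how EvolvingHockey or HockeyViz actually build their models; the stints, player names, rates, and penalty value are all hypothetical, and real models also account for offence, opponents, score, venue, and more. Each row is a stint, each column says whether a defending player was on the ice, and the target is xGA per 60 in that stint.

```python
# Toy ridge regression in the spirit of the adjustment described above.
# All stints, players, and rates are made up for illustration.

def ridge_fit(X, y, lam):
    """Solve (X^T X + lam * I) b = X^T y with Gauss-Jordan elimination."""
    n_rows, n_cols = len(X), len(X[0])
    # Augmented matrix [X^T X + lam * I | X^T y] of the normal equations.
    A = []
    for i in range(n_cols):
        row = [sum(X[r][i] * X[r][j] for r in range(n_rows)) for j in range(n_cols)]
        row[i] += lam  # the ridge penalty shrinks every coefficient toward 0
        row.append(sum(X[r][i] * y[r] for r in range(n_rows)))
        A.append(row)
    for col in range(n_cols):
        # Partial pivoting keeps the elimination numerically stable.
        pivot = max(range(col, n_cols), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        pivot_value = A[col][col]
        A[col] = [v / pivot_value for v in A[col]]
        for r in range(n_cols):
            if r != col:
                factor = A[r][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[i][n_cols] for i in range(n_cols)]

players = ["A", "B", "C"]
# Each stint: (defending players on the ice, xGA per 60 during that stint).
stints = [
    (("A", "B"), 2.0),
    (("A", "C"), 2.4),
    (("B", "C"), 3.0),
    (("A", "B"), 2.2),
    (("B", "C"), 2.8),
    (("A", "C"), 2.6),
]

# Indicator matrix: 1.0 if the player was on the ice for the stint.
X = [[1.0 if p in on_ice else 0.0 for p in players] for on_ice, _ in stints]
mean_rate = sum(rate for _, rate in stints) / len(stints)
# Centre the target so coefficients read as "relative to average".
y = [rate - mean_rate for _, rate in stints]

impact = dict(zip(players, ridge_fit(X, y, lam=1.0)))
for name, value in sorted(impact.items(), key=lambda kv: kv[1]):
    print(f"{name}: {value:+.3f} xGA/60 relative to average")
```

In this toy data, Player A ends up with the most negative coefficient, meaning the team allows the least when A is on the ice, even though A never plays alone. That is the point of the regression: it splits shared results among the players who were on the ice together.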

You can argue that a player’s on-ice stats are just a team stat: they describe how the team performs, not an individual player’s ability. But if Team A consistently does better over a large sample when Player A is on the ice, and consistently does worse without him, it’s not a simple coincidence. Every player plays with a variety of different teammates throughout the course of a season, and every player has different on-ice stats.
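
The with/without comparison can be sketched as a simple rate calculation. The totals below are hypothetical, and this is the raw version of the idea, before any adjustment for teammates or competition.

```python
def per_60(events, toi_seconds):
    """Convert a raw count into a per-60-minutes rate."""
    return events * 3600.0 / toi_seconds

# Hypothetical 5v5 totals for Team A, split by whether Player A is on the ice.
with_player = {"xga": 20.0, "toi_seconds": 36_000}      # 600 minutes
without_player = {"xga": 75.0, "toi_seconds": 108_000}  # 1,800 minutes

on_rate = per_60(with_player["xga"], with_player["toi_seconds"])
off_rate = per_60(without_player["xga"], without_player["toi_seconds"])

# Negative means the team allows fewer expected goals with the player on the ice.
relative = on_rate - off_rate

print(f"on-ice xGA/60:  {on_rate:.2f}")
print(f"off-ice xGA/60: {off_rate:.2f}")
print(f"relative:       {relative:+.2f}")
```

Using rates instead of raw totals matters here because the player is on the ice for far less time than he is off it.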

You may also argue that only players on good teams will have good on-ice results, but many players on bad teams can have solid on-ice stats. Some examples of players with good on-ice stats on bad teams include Detroit’s Anthony Mantha, Ottawa’s Brady Tkachuk, LA’s Anze Kopitar, etc.

Raw on-ice stats can be somewhat of a team stat, but once they’re isolated from the factors that could influence them, they’re a solid tool for judging a player’s impact on how their team plays. As stated before, goals for can be impacted by more than just the last guy who touched the puck, and goals against can be impacted by more than just a coverage error or turnover right before them. That’s why overall on-ice stats over a large sample are useful; they describe how well a team plays with that player, and whether that player is impacting goals and scoring chances or not.

In the end, most players with good defensive reputations still have good defensive macrostats (Bergeron, Couturier, Stone, etc), so it’s not like on-ice stats are *that* far off from the public opinion.

There are multiple on-ice stats that can be used to judge defensive play.

GA (goals allowed)

Goals allowed are a weird stat. I don’t like using them a lot, because they are highly influenced by the goalie and can be problematic for evaluating the defence of a skater. GA will always have the goalie involved, as it’s hard to separate the goalie’s performance from the skater’s performance. A good example: Corey Crawford had a .917 SV% playing on the Chicago Blackhawks in 19-20, the team with the most high danger chances allowed in the league. His GSAx (goals saved above expected) was 2nd in the league.

When you look at his (former) teammate Patrick Kane’s defensive numbers, you’ll see that he had a positive impact on goals allowed (per EvolvingHockey) in 19-20. In that regard, it looks like he was a solid defensive player. But his xGA (expected goals against) was near the bottom of the league. His GA was a positive because Crawford was making lots of saves and covering up for his mistakes.

GA can be useful over multiple seasons, but it’s too influenced by goaltending for it to be used to evaluate skater defence in my opinion.

xGA (expected goals against) and CA (shot attempts against)

Expected goals and Corsi tend to have a bad reputation, so I’ll break them down in a simple way here. Expected goals are basically built by assigning a weight to each unblocked shot attempt. The weight is mostly based on shot location and whether the shot comes from a high danger area. For example, a simple, low danger shot taken from the blue line is usually worth 0.01-0.02 expected goals, while a shot from a high danger area is worth about 0.2-0.3+ expected goals. This makes xG good at judging the quality of shots.

This image (via Natural Stat Trick) shows different areas of the ice and the probability of the puck going in from each. Unblocked shot attempts taken closer to the net or in the slot have a higher probability of scoring, so they get a higher xG weighting.
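
As a rough sketch of the weighting idea, the snippet below adds up xG for two hypothetical sets of shots against. The two weights are just midpoints of the ranges quoted above, picked for illustration; real public models estimate a value for every shot from its location and other details.

```python
# Illustrative weights only: midpoints of the ranges quoted in the text.
XG_BY_AREA = {"blue_line": 0.015, "high_danger": 0.25}

def expected_goals(shot_areas):
    """Sum the xG weight of each unblocked shot attempt."""
    return sum(XG_BY_AREA[area] for area in shot_areas)

# Hypothetical shots allowed with two different players on the ice.
many_point_shots = ["blue_line"] * 10
few_slot_shots = ["high_danger"] * 2

xga_point = expected_goals(many_point_shots)
xga_slot = expected_goals(few_slot_shots)

print(f"10 blue line shots:  {xga_point:.2f} xGA")
print(f"2 high danger shots: {xga_slot:.2f} xGA")
```

With these weights, two chances from a high danger area add up to more expected goals against than ten shots from the blue line.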

Private expected goal models (that NHL teams use) calculate xG using more factors than just shot location. But xG models available to the public are still fairly accurate in predicting actual goals.

Some people assume xG is only used for theoretical/predictive purposes or even a substitute for actual goals, but this isn’t the case. In terms of skaters, it’s just used to see how good a player is at play-driving and how good they are at generating and suppressing quality shots. They can also be used to see if a goalie is saving more than they are expected to (e.g. Goalie A allowed 2 goals, and their team allowed 2.5 expected goals, so the goalie saved 0.5 goals above expected).
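
The goalie example above is just a subtraction, sketched here with a hypothetical helper name:

```python
def goals_saved_above_expected(expected_goals_against, goals_against):
    """GSAx: positive means the goalie allowed fewer goals than expected."""
    return expected_goals_against - goals_against

# Goalie A from the example: 2.5 expected goals against, 2 actual goals against.
gsax = goals_saved_above_expected(2.5, 2)
print(f"GSAx: {gsax:+.1f}")
```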

It isn’t just used to project future performance; it’s also used to evaluate past performance.

CA (shot attempts against) is used to measure the quantity of shots against, and also possession. I prefer xGA over CA because xG factors in quality, while CA is just plain shot attempts. In my opinion, though, both are very solid ways to judge defence. There are other on-ice stats too, such as FA (unblocked shot attempts against), SA (shots against), HDCA (high danger chances against), etc.
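
To show how these counting stats relate to each other, here is a small sketch that tallies CA, FA, and SA from a made-up list of shot events against. The event labels are assumptions for this example, not the format of any real play-by-play feed.

```python
# Hypothetical shot events against while one player is on the ice.
# "save" and "goal" are shots on goal; "miss" missed the net; "block" was blocked.
events_against = ["save", "miss", "block", "goal", "block", "save", "miss"]

def count_shot_stats(events):
    """Return CA (all attempts), FA (unblocked attempts), and SA (shots on goal)."""
    ca = len(events)
    fa = sum(1 for e in events if e != "block")
    sa = sum(1 for e in events if e in ("save", "goal"))
    return {"CA": ca, "FA": fa, "SA": sa}

totals = count_shot_stats(events_against)
print(totals)
```

Each stat is a subset of the one before it: every shot on goal is an unblocked attempt, and every unblocked attempt is a shot attempt.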

Conclusion

Like I stated before, I think macrostats are the best way to evaluate defensive play. They show a player’s results and whether they’re actually impacting the team in terms of denying scoring chances. They do have one limitation, though: they only show the player’s results, and not how they achieved them.

That’s where video analysis and microstats come in. A good example of my point is Valeri Nichushkin, a very controversial player among the analytics and anti-analytics communities. Last season, in February, the twins who ran EvolvingHockey and made the GAR model claimed on Twitter that Nichushkin was having a better season than eventual Hart winner Leon Draisaitl. Per their model, Draisaitl ended up having the better year by the time the season paused, but the fact that the two were close confused people who didn’t follow analytics (like me at the time).

They were close for one reason: defence.

Draisaitl was one of the worst defensive players in the league per their model, while Nichushkin was apparently playing Selke level defence. But how?

@JFreshHockey did a deep dive on Nichushkin to figure out why he had these results (I would recommend reading his articles, he does great work). Using the eye test/video analysis, he concluded that a large reason for Nichushkin’s results was forechecking. His strong forechecking and hockey IQ helped keep the play going and the puck pinned in the offensive zone, which meant less time in the defensive zone. He was also tenacious when pressuring a defenceman trying to break out of the zone. This is backed up by excellent forechecking microstats.

Now, this may not be the definition of defence for some people, but the fundamental concept of defence should be to prevent scoring chances and make life easier for the goalies. Nichushkin did just that; he helped his team spend less time in its own end and allow fewer chances. Nichushkin obviously isn’t a better player than Draisaitl (or even close), and he wasn’t close to an MVP candidate last year. But he deserves recognition for this strong defensive play, which has continued this season. There are also other strong forechecking players who play similarly to Nichushkin and get great defensive results, such as Marcus Foligno, Zach Aston-Reese and, this year, Jesse Puljujarvi.

That’s how I would evaluate defence: use macrostats/on-ice stats to see and judge how good a player is defensively, and use microstats and video analysis to see why that player achieved those defensive results.

Find me on Twitter (@KoskiWin)